Fan-In vs Fan-Out Cheat Sheet

The two system design terms you gotta know

Some system design terms will click immediately, while others won’t until you’ve seen concrete definitions and visuals.

For me, “fan-in” and “fan-out” didn’t make sense until I found some visuals and real-world examples.

If those terms seem nebulous to you, read on.

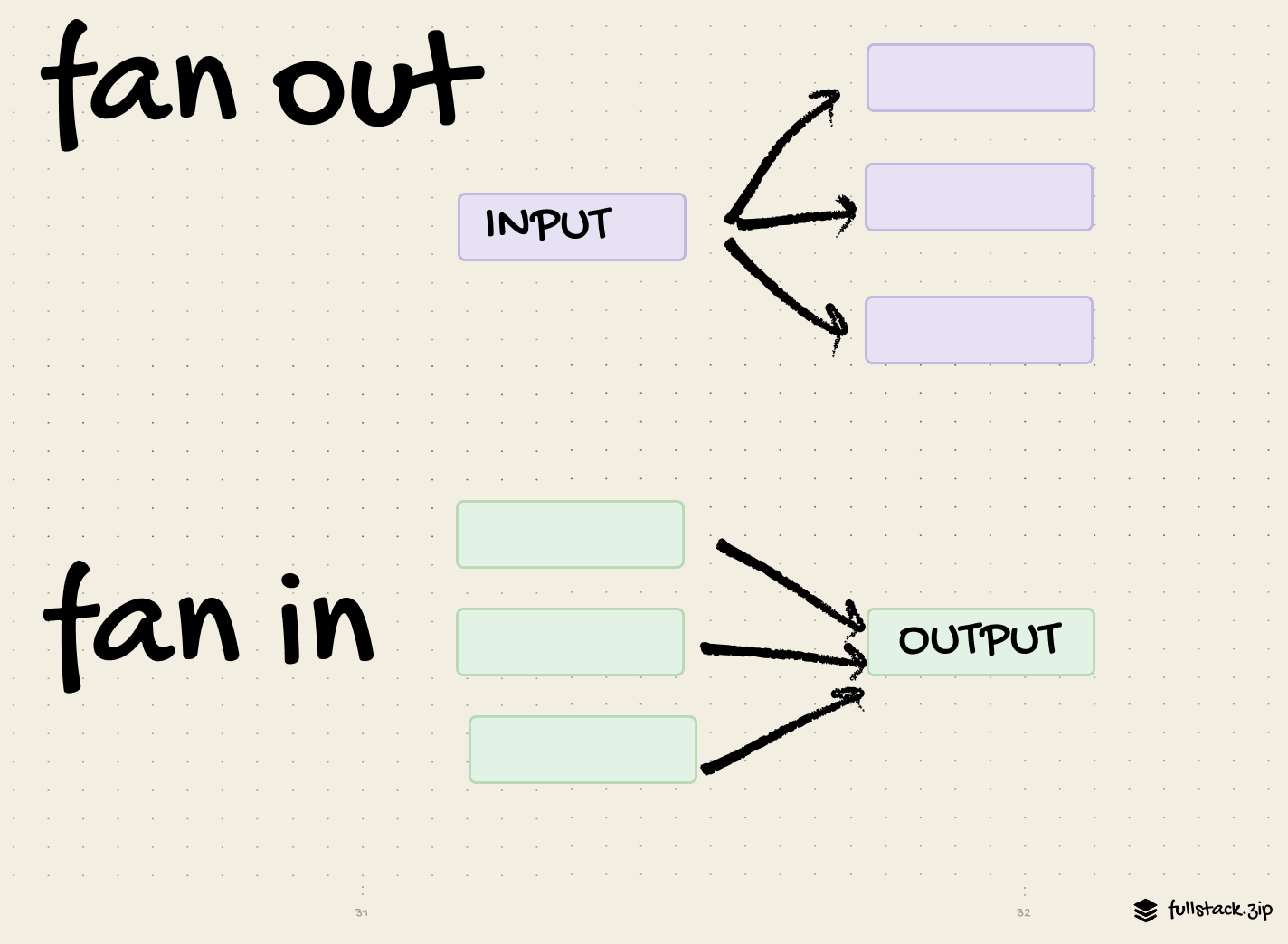

Fan-out splits.

Fan-in consolidates.

Fan-out: one thing triggers many downstream operations (broadcast / scatter).

Fan-in: many things consolidate into fewer results (gather / reduce).

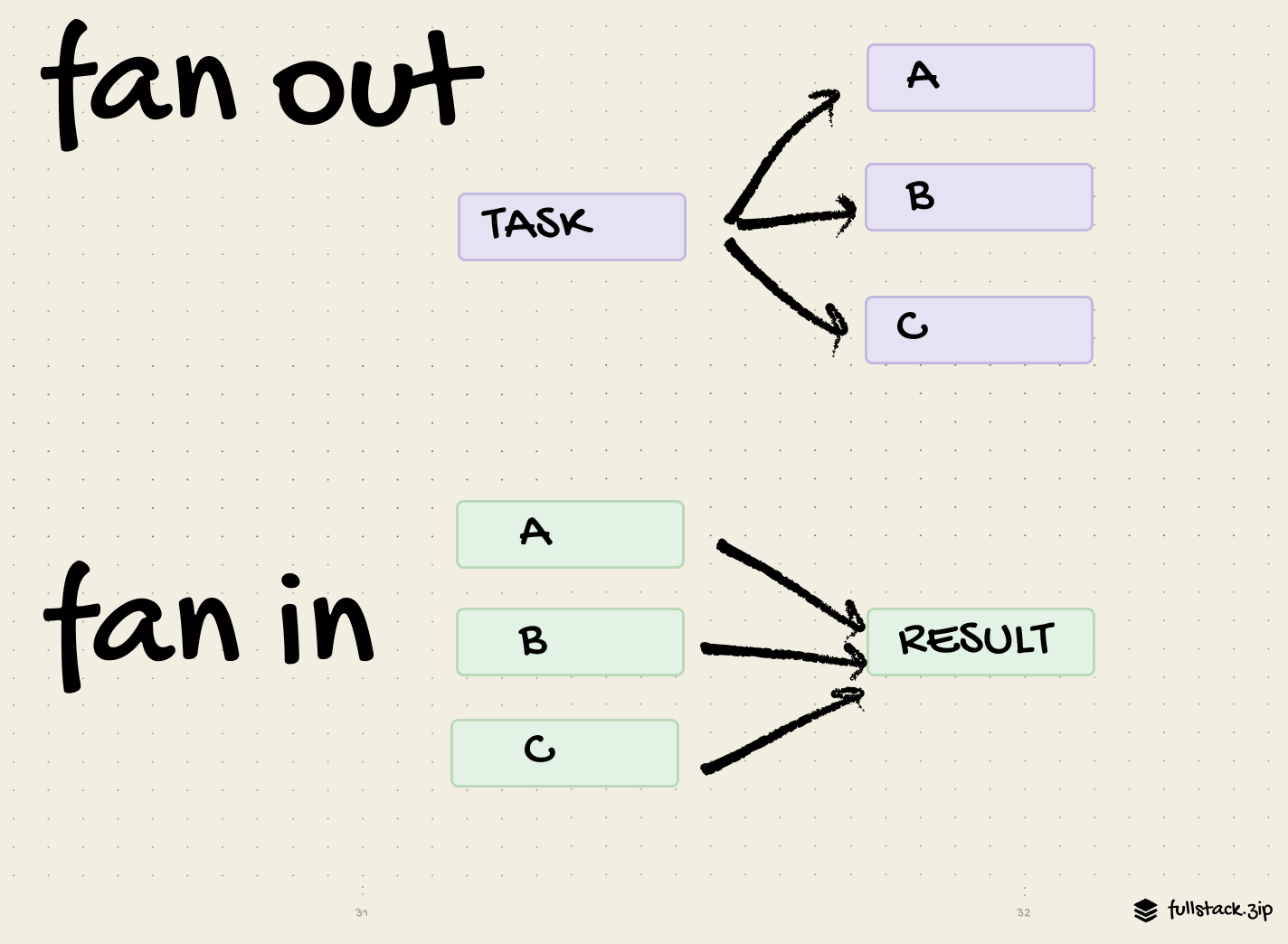

Fan-out splits a large job into smaller sub-tasks that run in parallel.

Fan-in consolidates outputs from those tasks into a single result.
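Here is a minimal sketch of that split-then-combine shape in Python. The word-count job and the names `count_words` and `scatter_gather` are made up for illustration. Threads keep the example short; CPU-heavy work in CPython would likely want processes instead.

```python
from concurrent.futures import ThreadPoolExecutor


def count_words(chunk: list[str]) -> int:
    """Sub-task: count the words in one chunk of lines."""
    return sum(len(line.split()) for line in chunk)


def scatter_gather(lines: list[str], chunk_size: int = 2) -> int:
    # Fan-out: split the large job into independent chunks.
    chunks = [lines[i:i + chunk_size] for i in range(0, len(lines), chunk_size)]
    with ThreadPoolExecutor(max_workers=4) as pool:
        partial_counts = list(pool.map(count_words, chunks))
    # Fan-in: consolidate the partial outputs into a single result.
    return sum(partial_counts)


lines = [
    "fan out splits",
    "fan in consolidates",
    "inputs are fanned out",
    "outputs are fanned in",
    "done",
]
total = scatter_gather(lines)
print(total)
```

The inputs (chunks) are fanned out to workers, and the outputs (partial counts) are fanned in by the final `sum`.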

Inputs are fanned out.

Outputs are fanned in.

Fan-out improves performance by running tasks in parallel.

Fan-in can improve performance by preventing unnecessary work. (But it can also hurt performance by becoming a bottleneck or a backed-up queue.)

In scatter-gather, fan-out happens first.

Fan-in usually follows a fan-out. (But it can also happen on its own, like log aggregation.)
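To make the standalone case concrete, here is a small sketch of log aggregation with no fan-out in front of it. The three log sources and their entries are invented for the example, and each source is assumed to already be sorted by timestamp, which `heapq.merge` requires.

```python
import heapq

# Hypothetical per-service logs as (timestamp, service, message) tuples,
# each list already sorted by timestamp.
web_logs = [(1, "web", "GET /"), (4, "web", "GET /cart")]
db_logs = [(2, "db", "SELECT orders"), (5, "db", "UPDATE inventory")]
auth_logs = [(3, "auth", "login ok")]

# Fan-in: many independent streams consolidate into one ordered stream.
merged = list(heapq.merge(web_logs, db_logs, auth_logs))

for timestamp, service, message in merged:
    print(timestamp, service, message)
```

Nothing was split up beforehand here. The streams simply exist, and the aggregator fans them in.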

Fan-out examples

A message in the #announcements Discord channel is fanned out to members.

A user uploads a video, and YouTube triggers tasks for audio extraction, thumbnail generation, and content moderation.

A light switch fans out the “on” operation to three light bulbs.

When you click “Place Order,” Amazon fans out tasks for processing the payment, updating warehouse inventory, and sending a confirmation email.

When you tap “Schedule Pickup,” Uber fans out notifications to dozens of drivers.

When you type “best pizza,” Google fans out your query to many shards.
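The “Place Order” example could look roughly like this with `asyncio`. This is only a sketch: the three handler functions are hypothetical stand-ins, and the `sleep` calls simulate network calls to real services.

```python
import asyncio


async def charge_payment(order_id: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for a call to a payment service
    return f"payment charged for {order_id}"


async def update_inventory(order_id: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for a call to a warehouse service
    return f"inventory updated for {order_id}"


async def send_confirmation(order_id: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for a call to an email service
    return f"confirmation sent for {order_id}"


async def place_order(order_id: str) -> list[str]:
    # Fan-out: one event triggers three independent downstream tasks,
    # which run concurrently instead of one after another.
    return await asyncio.gather(
        charge_payment(order_id),
        update_inventory(order_id),
        send_confirmation(order_id),
    )


results = asyncio.run(place_order("order-42"))
print(results)
```

`asyncio.gather` returns results in the same order the tasks were passed in, regardless of which one finishes first.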

Fan-in examples

An API fans in duplicate queries so that only one is sent to the DB (request coalescing).

Google Flights collects individual search results from multiple airlines and presents a single list to the user.

After each shard returns its results, Google Search fans in the results and serves the top 10.
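Request coalescing is the least intuitive of these, so here is a rough sketch. It assumes a single-process `asyncio` service. The names `in_flight`, `query_db`, and `coalesced_get` are made up, and `db_calls` exists only to show how many queries actually reach the database.

```python
import asyncio

db_calls = 0  # counts how many queries actually reach the "database"
in_flight: dict[str, asyncio.Task] = {}  # key -> query currently running


async def query_db(key: str) -> str:
    global db_calls
    db_calls += 1
    await asyncio.sleep(0.01)  # stand-in for a slow database round trip
    return f"row for {key}"


async def coalesced_get(key: str) -> str:
    task = in_flight.get(key)
    if task is None:
        # First caller for this key starts the real query.
        task = asyncio.create_task(query_db(key))
        in_flight[key] = task
        # Forget the task once it finishes so later calls fetch fresh data.
        task.add_done_callback(lambda _: in_flight.pop(key, None))
    # Fan-in: every duplicate caller awaits the same in-flight task.
    return await task


async def main() -> list[str]:
    return await asyncio.gather(*(coalesced_get("user:1") for _ in range(5)))


rows = asyncio.run(main())
print(db_calls, rows)
```

Five callers ask for the same key, but only one query is issued. This is not a cache: once the query completes, the next request triggers a new one.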

When to fan

Fan-out when: work is independent, parallelizable, and latency matters.

Beware of thundering herd, cost spikes, downstream overload, and partial failures.
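Two of those risks, downstream overload and partial failures, can be softened with a concurrency cap and per-task error handling. The sketch below assumes an `asyncio` setup. `notify_driver` is hypothetical, and every fifth driver fails on purpose to simulate an unreachable device.

```python
import asyncio


async def notify_driver(driver_id: int, limit: asyncio.Semaphore) -> str:
    async with limit:  # cap how many notifications are in flight at once
        await asyncio.sleep(0.01)  # stand-in for a push-notification call
        if driver_id % 5 == 0:
            # Simulated failure so the example has something to handle.
            raise ConnectionError(f"driver {driver_id} unreachable")
        return f"driver {driver_id} notified"


async def notify_all(driver_ids: list[int], max_in_flight: int = 3):
    limit = asyncio.Semaphore(max_in_flight)
    # return_exceptions=True keeps one failure from discarding the rest.
    outcomes = await asyncio.gather(
        *(notify_driver(d, limit) for d in driver_ids),
        return_exceptions=True,
    )
    delivered = [o for o in outcomes if not isinstance(o, Exception)]
    failed = [o for o in outcomes if isinstance(o, Exception)]
    return delivered, failed


delivered, failed = asyncio.run(notify_all(list(range(1, 11))))
print(len(delivered), "delivered,", len(failed), "failed")
```

The semaphore bounds the blast radius of a large fan-out, and collecting exceptions lets the caller decide what to retry instead of failing the whole batch.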

Fan-in when: you need a single answer, dedup, or batching.

Beware of hotspots, head-of-line blocking, the slowest task setting the overall response time (“tail latency”), and complex retries.
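One common way to blunt tail latency is to fan in with a deadline and return whatever has arrived. This is a sketch under assumptions: `query_shard` is hypothetical, the delays are invented, and whether partial results are acceptable depends entirely on the product.

```python
import asyncio


async def query_shard(shard_id: int, delay: float) -> str:
    await asyncio.sleep(delay)  # stand-in for a shard's response time
    return f"shard {shard_id} results"


async def gather_with_deadline(
    delays: dict[int, float], deadline: float
) -> tuple[list[str], int]:
    tasks = [asyncio.create_task(query_shard(s, d)) for s, d in delays.items()]
    # Fan-in, but only wait up to `deadline` seconds for stragglers.
    done, pending = await asyncio.wait(tasks, timeout=deadline)
    for task in pending:
        task.cancel()  # stop waiting on the slow shards
    results = sorted(task.result() for task in done)
    return results, len(pending)


# Shard 3 is deliberately slow to play the part of the straggler.
results, skipped = asyncio.run(
    gather_with_deadline({1: 0.01, 2: 0.02, 3: 1.0}, deadline=0.2)
)
print(results, "skipped:", skipped)
```

Without the deadline, the response would take as long as the slowest shard. With it, the caller trades completeness for a bounded wait.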

(Check out our Discord case study for a real-world example of these trade-offs.)

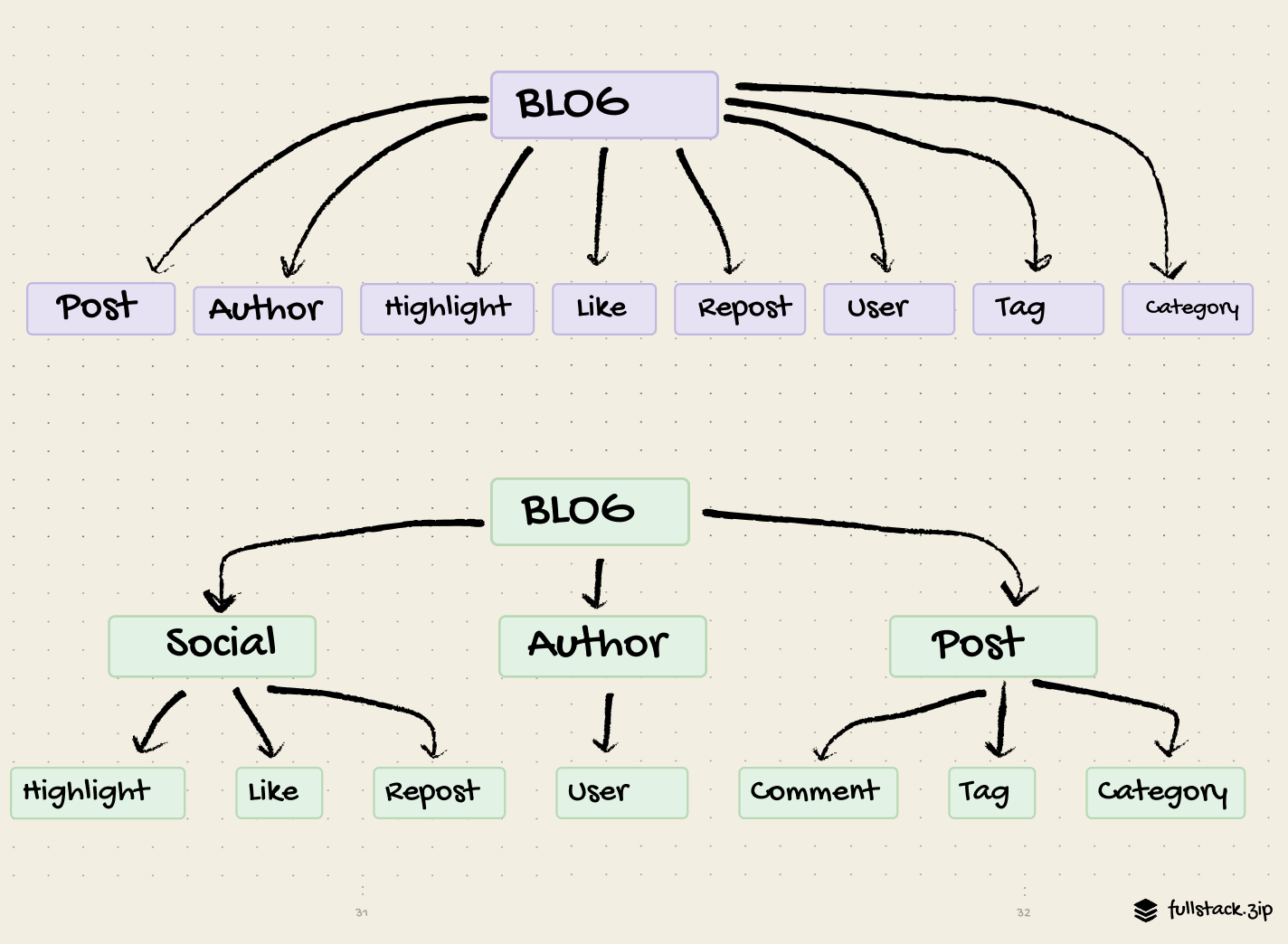

A good principle is to avoid very high fan-out and very high fan-in.

The examples above focus on data flow, but this also applies to data modeling.

Would you rather maintain the fanned-out (purple) blog?

Hopefully these terms feel a little less weird now.

Forward this to someone on your team who is also combating fan jargon.